TL;DR

Yes. And we proved it at an AWS hackathon.

At a recent iGaming-focused hackathon hosted by AWS in London, a cross-functional CreateFuture team built a fully working agentic AI architecture in a single day.

Invited through our AWS partnership, a squad of five iGaming and betting experts applied AWS AI tooling to solve a genuine client problem. Not a slide deck. Not a concept. A near-production system running on Amazon Bedrock, handling real data.

We built a configurable agentic pipeline that tackles editorial overhead, one of iGaming's biggest operational headaches. When conditions change mid-event, like a weather shift at a horse race or a player dropping out of a starting lineup, editorial teams have to manually research and rewrite commentary in real time. Our solution uses dynamic, multi-agent workflows to consume market events and transform them into finished editorial pieces, with manual review built in before anything is published. The architecture goes well beyond editorial though, and the same pipeline could be configured for translation, pricing analysis, live data processing and more.

The team of five experts, from across disciplines, was brought together by Hassan Peymani, Head of iGaming, through our AWS partnership. The team on the ground was: Neha Prakash, Cloud Engineer; Nick Callaghan, Lead Product Consultant; Jessie Zhu, Software Engineering Manager; Bryan Galvin, Lead Engineer; and Thomas Morley-Johnson, Principal Consultant.

AI in iGaming is moving beyond code completion

SOFTSWISS’ 2026 iGaming Trends report found that 30% of engineers already use AI tools for code completion, producing a 10–15% increase in development speed. That's a useful gain. But at the hackathon, we weren't just using AI to write code faster. We used it to capture design conversations, generate a full specification, and build a working architecture. AI wasn't an add-on to the process. It was the process, and it meant we moved at incredible speed.

iGaming operators are still paying the price for legacy overhead

The problem the team tackled is a common one in iGaming. Operational teams, whether they're in responsible gaming, payments, fraud or editorial, spend huge amounts of time reacting to system events manually. Something happens, someone gets notified, someone has to act. It's slow, expensive and doesn't scale.

This particular challenge focused on editorial overhead. iGaming companies need content produced quickly, often in response to live events, like changing weather conditions or team lineups. But the research-to-publication pipeline is bogged down by manual processes and legacy systems that weren't designed for the speed operators now need.

"We picked this because it's a challenge a current client has," says Nick Callaghan, Product Manager at CreateFuture. "Having something tangible that a client can see and understand how it might work is more useful than any theoretical exercise."

Spec first, code second. And AI changed who did what.

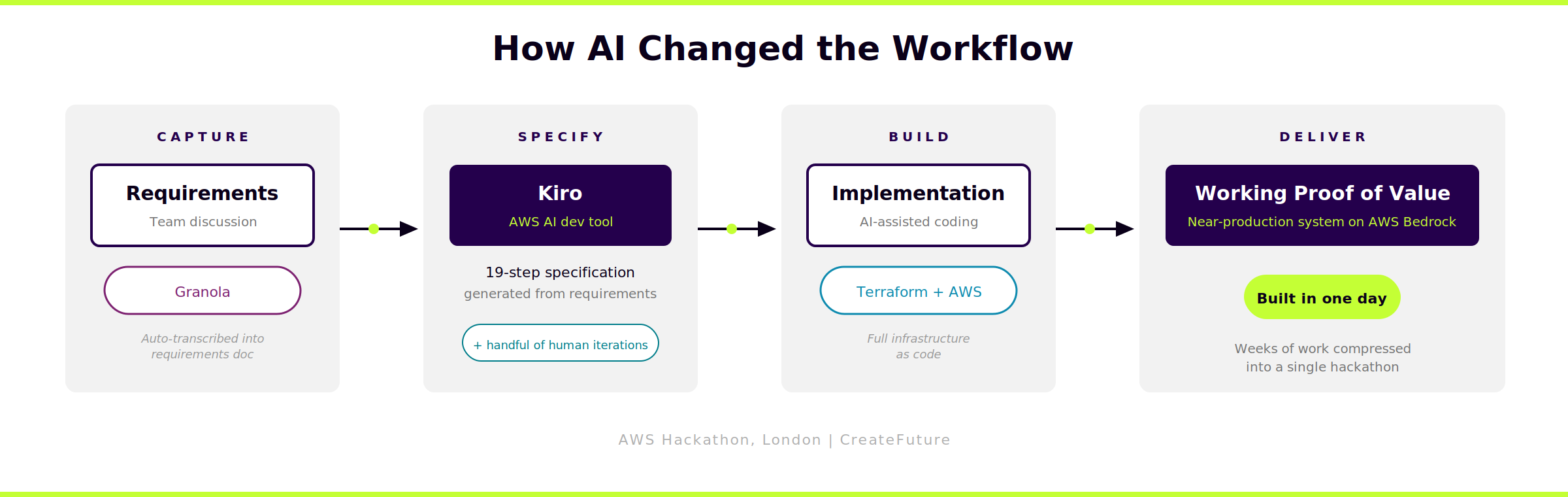

We kept AI central to our process and used tools at every stage. Granola ran in the room, automatically transcribing our requirements discussion and producing a requirements document. We fed that into Kiro, AWS's AI development tool, which analysed our requirements and produced a detailed 19-step specification. That gave us a powerful starting point to take into implementation with only a handful of human iterations needed.

Something interesting happened with roles on the day. The software engineer was involved in shaping the specification. The product manager was able to contribute directly to the coding. AI didn't replace anyone's expertise. It made the team more flexible, so people could contribute where they added the most value rather than staying inside traditional role boundaries.

This was a cross-functional team using AI to work in the way that made the most sense for the problem, with product thinking driving the implementation and engineering thinking driving the spec. That's what AI-native delivery looks like in practice.

[Figure: agentic pipeline architecture]

An agentic pipeline that goes well beyond editorial

What the team built is an agentic ingestion pipeline with four stages: ingest, transform, manage and publish. Data arrives from any source via an AWS Lambda function and is routed to an AI agent running on Amazon Bedrock, which processes it according to its instructions. The output is then pushed to a queue for a third party to pick up. The whole thing is defined in Terraform and could be deployed to production with minimal hardening.
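To make the four stages concrete, here is a minimal Python sketch. It is not the hackathon code: every function name is hypothetical, the agent is a stub where a Bedrock-hosted agent would sit, a plain list stands in for the outbound queue, and placing the manual review step in the "manage" stage is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Draft:
    event: dict
    text: str
    approved: bool = False


def ingest(raw: dict) -> dict:
    """Ingest: normalise an incoming event (the role the Lambda function plays)."""
    return {"type": raw.get("type", "unknown"), "payload": raw.get("payload", {})}


def transform(event: dict, agent: Callable[[str], str]) -> Draft:
    """Transform: hand the event to an agent and keep its output as a draft."""
    prompt = f"Write a short editorial update for this event: {event}"
    return Draft(event=event, text=agent(prompt))


def manage(draft: Draft, reviewer: Callable[[Draft], bool]) -> Draft:
    """Manage (assumed): manual review gate; a reviewer approves or rejects."""
    draft.approved = reviewer(draft)
    return draft


def publish(draft: Draft, outbound: List[str]) -> bool:
    """Publish: push approved drafts to the outbound queue for a third party."""
    if not draft.approved:
        return False
    outbound.append(draft.text)
    return True


def stub_agent(prompt: str) -> str:
    """Stand-in for a real agent call; echoes part of the prompt."""
    return "DRAFT: " + prompt[:40]


if __name__ == "__main__":
    outbound: List[str] = []
    event = ingest({"type": "weather", "payload": {"going": "soft"}})
    draft = manage(transform(event, stub_agent), reviewer=lambda d: True)
    print(publish(draft, outbound), outbound)
```

Passing the agent and the reviewer in as plain callables keeps each stage swappable, which is the property the pipeline depends on.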

The architecture is fully configurable. On the day, the team demonstrated it summarising data into editorial content and translating English text into Spanish. But the design is intentionally extensible. The same pipeline could handle pricing analysis, data modelling, or processing live sports feeds for pattern matching and probability analysis.
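One way to picture "configurable" is a small registry of task definitions that decides which instructions the agent receives. The sketch below is an illustration only: `TaskConfig`, `TASKS` and `build_prompt` are hypothetical names, and the instruction strings are invented to mirror the two use cases demonstrated on the day.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class TaskConfig:
    name: str
    instructions: str  # what the agent is told to do with the input


# Hypothetical registry: adding a use case means adding an entry here.
TASKS: Dict[str, TaskConfig] = {
    "editorial": TaskConfig(
        "editorial", "Summarise the input data as a short editorial piece."
    ),
    "translate_es": TaskConfig(
        "translate_es", "Translate the input text from English into Spanish."
    ),
}


def build_prompt(task_name: str, data: str) -> str:
    """Combine the configured instructions with the incoming data."""
    try:
        task = TASKS[task_name]
    except KeyError:
        raise ValueError(f"Unknown task: {task_name!r}") from None
    return f"{task.instructions}\n\nInput:\n{data}"


if __name__ == "__main__":
    print(build_prompt("translate_es", "The favourite has been withdrawn."))
```

Under this assumption, a new use case such as pricing analysis would be a new registry entry rather than a new pipeline.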

What matters here isn't just the AI. It's that we built a full, working platform in a day. Not a prototype to demo and forget. A proof of value that an operator could take, configure and run.

"The prototype we produced in a day is experimental but functional," says Callaghan. "It may not be a complete working system, but it's easily weeks' worth of work if you're not using AI."

What this means if you're running an iGaming platform

iGaming operators are looking for speed and modernisation. New AI-focused operators are entering the market. Prediction markets are growing. Everyone wants to be first to the next opportunity. But most established operators are carrying 20 years of technical debt that slows everything down.

Agentic AI offers a way to reduce operational overhead without ripping out existing systems. It sits alongside what you already have, handles the repetitive work, and frees your teams to focus on the decisions that actually need a human. The architecture we built in a day is a working example of how that can start.

If your iGaming team is spending more time reacting to events than acting on them, this is the kind of thing we build. Want to see what this pipeline looks like configured for your workflows? Let's talk.

FAQs

What is an agentic AI architecture?

An agentic AI architecture is a system where AI agents operate with defined roles, tools and instructions to complete tasks with minimal human intervention. Unlike simple chatbot or autocomplete AI, agentic systems can receive data, make decisions based on their instructions, use tools to gather additional information, and take actions. In iGaming, this means AI agents can handle operational workflows like content generation, data processing, or event response that would otherwise require manual effort from operations teams.
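The receive, decide, use tools, act loop can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the "model" is a scripted stand-in rather than a real language model, and `run_agent`, `scripted_model` and `fake_weather` are hypothetical names with made-up data.

```python
from typing import Callable, Dict, List, Tuple

# A step is ("tool", tool_name, argument) or ("final", text, "").
Step = Tuple[str, str, str]
Model = Callable[[List[str]], Step]


def run_agent(model: Model, tools: Dict[str, Callable[[str], str]],
              task: str, max_steps: int = 5) -> str:
    """Loop: ask the model what to do next, run tools, stop on a final answer."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        kind, name_or_text, arg = model(history)
        if kind == "final":
            return name_or_text
        if name_or_text not in tools:
            history.append(f"error: unknown tool {name_or_text}")
            continue
        result = tools[name_or_text](arg)
        history.append(f"{name_or_text}({arg}) -> {result}")
    raise RuntimeError("agent did not finish within max_steps")


def scripted_model(history: List[str]) -> Step:
    """Stand-in model: first requests the weather, then writes the update."""
    if len(history) == 1:
        return ("tool", "weather", "Course A")
    return ("final", f"Update based on: {history[-1]}", "")


def fake_weather(course: str) -> str:
    """Stand-in tool returning invented data."""
    return f"heavy rain at {course}"


if __name__ == "__main__":
    print(run_agent(scripted_model, {"weather": fake_weather}, "race update"))
```

The `max_steps` cap is a design choice worth keeping in any real version: it stops an agent that never reaches a final answer from looping indefinitely.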

How fast can you prototype agentic AI for iGaming?

Using spec-driven development and AI-assisted coding, CreateFuture built an agentic AI proof of value in a single day at an AWS hackathon. The speed demonstrates what's possible when AI tooling is embedded across the full workflow, not just code completion. A production-ready system would still require security hardening, testing and iteration, but the approach dramatically compresses the early stages of design and prototyping, giving teams and clients something tangible to evaluate and build on far sooner than traditional methods allow.

What AWS services are used in agentic iGaming workflows?

The architecture built by CreateFuture uses Amazon Bedrock for the AI agent layer, AWS Lambda for data ingestion, and Amazon SQS for message queuing between pipeline stages. The entire infrastructure is defined in Terraform for repeatable deployment. Amazon Bedrock provides enterprise-grade large language model access, allowing teams to define custom agents with specific instructions and tools suited to iGaming operational needs.

Can agentic AI work alongside existing iGaming systems?

Yes. The pipeline architecture is designed as a pull workflow that ingests data from any source, processes it through an AI agent, and outputs the result to a third party. This means it can sit alongside existing systems without requiring a full platform rebuild. For iGaming operators carrying legacy technical debt, this approach reduces operational overhead incrementally, starting with high-volume, repetitive workflows like editorial content, translations, or data processing.
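The pull pattern itself is simple, as the following Python sketch shows. It uses the standard library's in-memory `queue.Queue` as a stand-in for a managed message queue, and `pull_once` and `drain` are hypothetical names, so treat it as an illustration of the shape rather than the deployed design.

```python
import queue
from typing import Callable


def pull_once(source: "queue.Queue[str]", sink: "queue.Queue[str]",
              process: Callable[[str], str]) -> bool:
    """Pull one message if available, process it, push the result.

    Returns True if a message was handled, False if the source was empty.
    """
    try:
        message = source.get_nowait()
    except queue.Empty:
        return False
    sink.put(process(message))
    return True


def drain(source: "queue.Queue[str]", sink: "queue.Queue[str]",
          process: Callable[[str], str]) -> int:
    """Keep pulling until the source is empty; return how many were handled."""
    handled = 0
    while pull_once(source, sink, process):
        handled += 1
    return handled


if __name__ == "__main__":
    inbound: "queue.Queue[str]" = queue.Queue()
    outbound: "queue.Queue[str]" = queue.Queue()
    inbound.put("lineup change: player withdrawn")
    # str.upper stands in for the AI agent's processing step.
    print(drain(inbound, outbound, str.upper), outbound.get_nowait())
```

Because the pipeline only reads from one queue and writes to another, the existing systems on either side do not need to know it exists.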

Meet the author

Thomas Morley-Johnson is Principal Consultant at CreateFuture. He specialises in iGaming platform strategy and AI-led delivery, with a background spanning software engineering, architecture and product-focused transformation across betting and gaming.
